“Practice IS everything. This is often misquoted as Practice makes perfect.” – Periander

You know that Learning by Doing is much more effective than learning by seeing, reading or hearing. (And if you don’t know this, read this post!) It is an interactive endeavor that puts students through the actual paces of the subject being taught.

Why then, does evaluation of this learning not adhere to the same rigorous standard?

Not an Effective Form of Testing

Once training is complete, the natural next step is Testing & Evaluation, and this often takes the form of a series of multiple choice questions or some other passive test. That is not an accurate measure of a hands-on skill. When one Learns by Doing, it follows that they should be Tested by Doing, doesn’t it? And this ‘doing’ should continue as part of a steady diet designed to keep skills sharp.

Unfortunately, most testing done today is preoccupied with checking boxes, even when it comes to evaluating visual and hands-on functions like Operations, Maintenance, Service and Repair that require understanding the process as a whole.

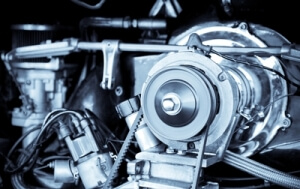

Let’s consider an actual example to illustrate: What would be the best way to test someone trained to replace a broken fan belt?

Answer: Have them replace a broken fan belt! Taking a written test about the repair wouldn’t be nearly as effective, nor would it provide an accurate evaluation of the required skill.

There are roadblocks to performing this type of evaluation, of course:

First, keeping a supply of broken fan belts on hand for testing would prove difficult, particularly because replacing one correctly just once wouldn’t necessarily prove mastery. (A three out of three score would satisfy that requirement though.)

Second, we would need an instructor on hand while the student is tested, capable of providing individualized feedback, so that if the student has trouble, the instructor could provide hints or log exactly where the student went wrong. This would be cost-prohibitive to implement at scale.

Third, just because someone can do it today doesn’t mean they will be able to perform the procedure accurately two years from now. Re-certification and periodic testing are key. Again, re-testing in person requires additional dollars.

But what are your options?

When using 3D Interactive Virtual Technology for training, it’s important to think ahead to testing and evaluation as part of your initial design process.

This makes sense, as the training technology may be the only way to have the student perform the procedure without being physically in front of the equipment.

And at Heartwood we have created a robust framework that can:

- Provide hints when students need help

- Record measurable data like errors made, time to complete and number of hints requested

- Evaluate students anywhere, any time and as MANY times as needed via immersive software on laptops and tablets. Scaling your testing is not only affordable – it’s INCLUDED.
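To make the idea of measurable data concrete, here is a minimal sketch in Python of what a record of one virtual attempt might look like, along with the three out of three mastery check mentioned earlier. This is an illustration only and not Heartwood’s actual framework: the names `AttemptRecord`, `is_clean` and `shows_mastery` are hypothetical, and so is the assumption that a passing attempt means zero errors and zero hints.

```python
from dataclasses import dataclass


@dataclass
class AttemptRecord:
    """One virtual attempt at a procedure (hypothetical fields)."""
    errors: int                 # errors made during the attempt
    seconds_to_complete: float  # time to complete
    hints_requested: int        # number of hints requested

    def is_clean(self) -> bool:
        # Assumption: a passing attempt has no errors and needed no hints.
        return self.errors == 0 and self.hints_requested == 0


def shows_mastery(attempts: list[AttemptRecord], required: int = 3) -> bool:
    """Return True if the most recent `required` attempts were all clean."""
    if len(attempts) < required:
        return False
    return all(a.is_clean() for a in attempts[-required:])


# Made-up example data: one shaky first try, then three clean attempts.
attempts = [
    AttemptRecord(errors=2, seconds_to_complete=410.0, hints_requested=1),
    AttemptRecord(errors=0, seconds_to_complete=300.0, hints_requested=0),
    AttemptRecord(errors=0, seconds_to_complete=275.0, hints_requested=0),
    AttemptRecord(errors=0, seconds_to_complete=260.0, hints_requested=0),
]
```

With this sample data, `shows_mastery(attempts)` looks only at the last three attempts, so the shaky first try does not count against the student once three clean attempts in a row are on record.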

Effective assessment, evaluation and testing are built on three major pillars:

1. Performance. The student should perform the task unassisted, with help and hints available if and when needed.

2. Remedial action. When the student is wrong or stuck, provide a way to self-correct.

3. Scoring and Evaluation. For self-evaluation and/or sharing results with an instructor.

And interactive assessments capture all three. The video below captures a student performing a procedure virtually and being tested on it:

And when you’re done with testing and evaluations – hold on to that data! Our upcoming post will tell you why it’s valuable and how you can use it to create better training going forward.

How are you evaluating learning now – and does it truly capture what you’d like it to (or better – what it’s capable of capturing)? Reach out here!